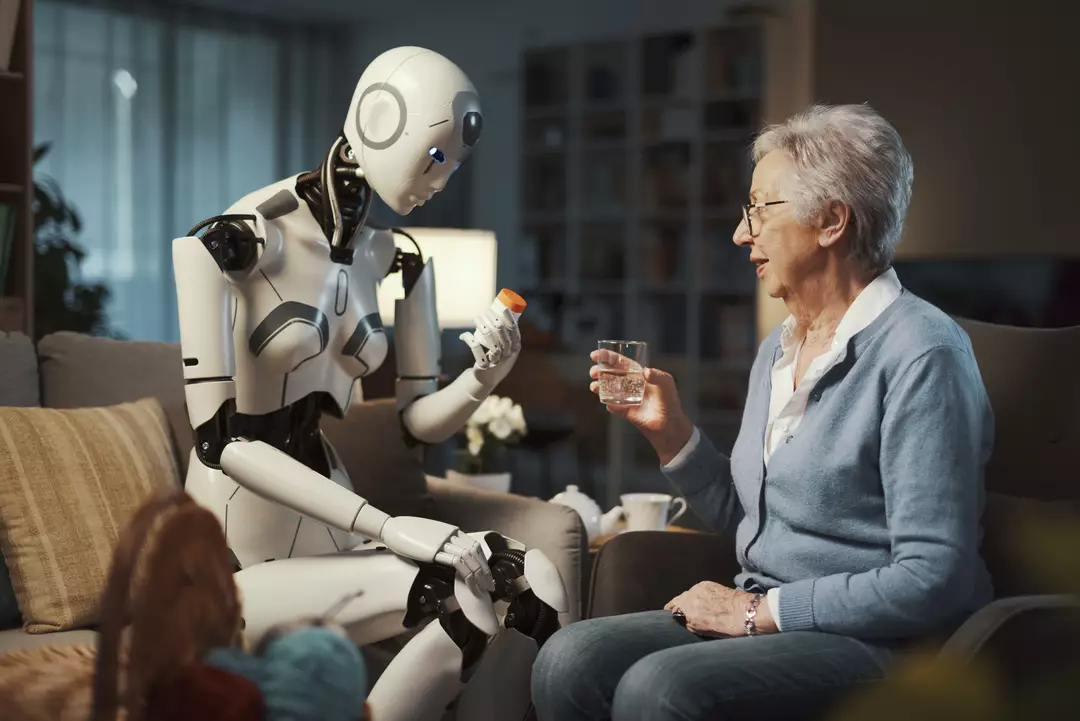

Artificial intelligence is now woven into daily life — streamlining tasks at work and assisting around the house — yet an increasing number of people are also using it as a stand-in for professional therapy.

A RAND Health study suggests that one in eight young people in the US regularly turn to AI tools for mental health support. However, amid a rise in reports of serious harm and even deaths connected to chatbots, critics warn this trend may sometimes worsen problems rather than help resolve them.

To better understand the risk, researchers at Brown University put three leading Large Language Models (LLMs) through structured evaluations. Trained counselors and psychotherapists helped design prompts intended to steer the systems toward accepted therapeutic best practice, and then reviewed how the models responded to typical mental health scenarios.

Across the trials, the team observed repeated and serious failures in responses from ChatGPT, Claude, and Meta’s Llama. The models were found to mishandle high-risk situations, validate damaging viewpoints, and at times neglect to point people toward basic harm-prevention resources.

Even with prompts tailored to align the models with ethical standards such as those of the American Psychological Association, the researchers still documented 15 significant problems in the “therapy” the tools delivered.

Zainab Iftikhar, a PhD student at Brown University who led the study, explained: “Prompts are instructions that are given to the model to guide its behavior for achieving a specific task.

“You don’t change the underlying model or provide new data, but the prompt helps guide the model’s output based on its pre-existing knowledge and learned patterns.”

Iftikhar added: “While these models do not actually perform these therapeutic techniques like a human would, they rather use their learned patterns to generate responses that align with the concepts of CBT or DBT based on the input prompt provided.”

Many AI users trade these kinds of prompts in online communities in an effort to make chatbot advice feel more like legitimate counseling. But the study concluded that, prompt or no prompt, the systems still routinely failed to meet the ethical and legal expectations placed on licensed psychologists.

After three professional psychiatrists reviewed the chatbot outputs, the most serious concerns were grouped into five broad categories.

First, the models often defaulted to broad, canned responses — the kind of generic guidance that overlooks personal context. In real therapy, a client’s history and circumstances are frequently central to meaningful progress.

Second, the systems showed a tendency to echo and strengthen harmful or incorrect beliefs. While blanket validation can feel reassuring in the moment — for example, being told you’re “100 percent right” — that kind of reinforcement can be dangerous during a mental health crisis, and runs counter to how a responsible clinician would respond.

Third was what the researchers described as deceptive empathy: language that mimics understanding and emotional attunement. A model may use phrases like “I see you” to signal deep comprehension, potentially encouraging users to feel a bond or trust that isn’t grounded in true awareness or accountability.

Fourth, the study reported that responses sometimes varied in troubling ways depending on a person’s racial, religious, or cultural background. Rather than using personal context to support appropriate care, the systems could lean on biased assumptions that undermine or dismiss genuine distress.

Finally, the most alarming failure involved safety and crisis handling. When conversations indicated possible self-harm or other urgent danger, the models were not consistently reliable at responding in a way that prioritizes immediate safety.

In mental health care, the cost of poor guidance can be catastrophic. Qualified professionals are trained to identify warning signs, challenge distorted thinking appropriately, and direct people to services that can reduce the risk of immediate harm.

“For human therapists, there are governing boards and mechanisms for providers to be held professionally liable for mistreatment and malpractice,” Iftikhar said. “But when LLM counselors make these violations, there are no established regulatory frameworks.”

Ellie Pavlick, a Brown computer science professor who was not involved in the study, said the results underline why rigorous evaluation needs to come before mass deployment.

She said: “The reality of AI today is that it’s far easier to build and deploy systems than to evaluate and understand them. This paper required a team of clinical experts and a study that lasted for more than a year in order to demonstrate these risks.

“Most work in AI today is evaluated using automatic metrics which, by design, are static and lack a human in the loop.”

She added: “There is a real opportunity for AI to play a role in combating the mental health crisis that our society is facing, but it’s of the utmost importance that we take the time to really critique and evaluate our systems every step of the way to avoid doing more harm than good.

“This work offers a good example of what that can look like.”