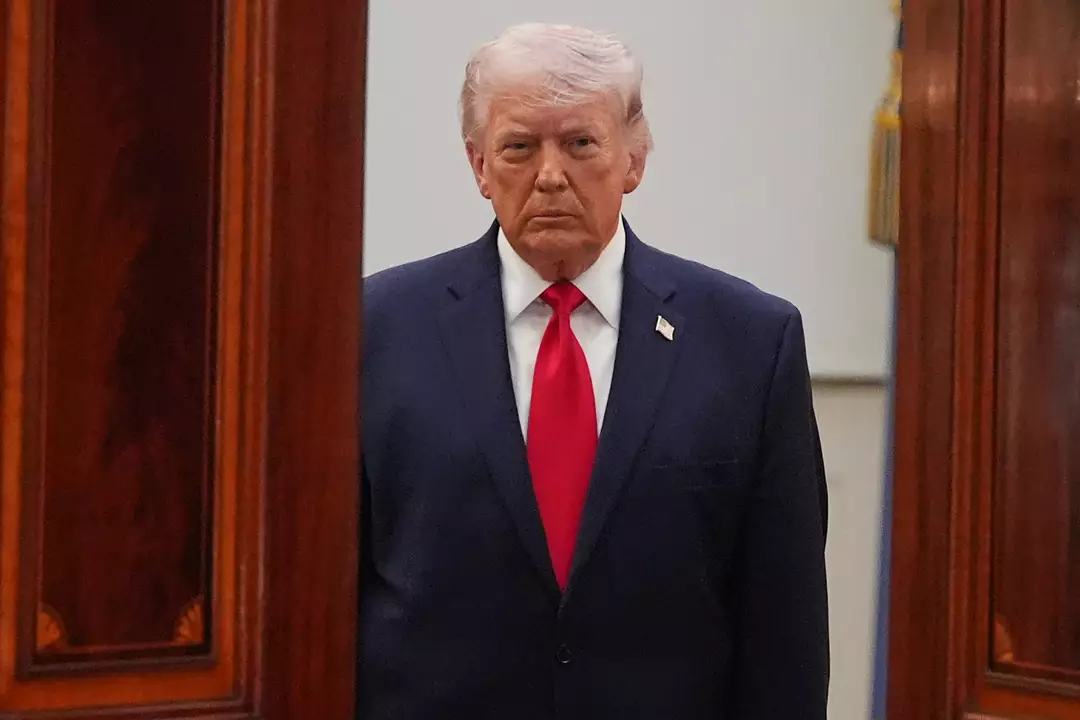

Donald Trump has met with senior US banking figures to discuss how artificial intelligence could threaten the security of the financial system.

He attended a session at the Treasury Department’s headquarters in Washington DC in April, alongside Treasury Secretary Scott Bessent and Federal Reserve Chair Jerome Powell.

Bloomberg reported that the gathering was convened for banks considered systemically important in the United States and internationally.

According to the Daily Mail, the attendee list included Bank of America CEO Brian Moynihan, Goldman Sachs CEO David Solomon, Citigroup CEO Jane Fraser, Wells Fargo CEO Charlie Scharf, and Morgan Stanley CEO Ted Pick.

The discussion reportedly focused on Anthropic and a newly developed AI model from the company called Claude Mythos.

Anthropic has raised alarms about what the technology may be capable of, warning that it could potentially be used to break into protected systems.

In a blog post about the model, the company wrote: “AI models have reached a level of coding capability where they can surpass all but the most skilled humans at finding and exploiting software vulnerabilities.”

It cautioned: “The fallout – for economies, public safety, and national security – could be severe.”

As an example, a case study described Claude Mythos uncovering an issue in OpenBSD that had apparently gone unnoticed for 27 years.

OpenBSD is an operating system widely regarded as security-focused, and the flaw had not previously been identified by human experts.

The weakness reportedly meant an attacker could remotely crash a computer simply by connecting to it.

In another reported scenario, the model was able to chain together multiple weaknesses in the Linux kernel.

Because the Linux kernel underpins a large share of the world’s servers, Anthropic warned that this kind of vulnerability could enable a hacker to “escalate from ordinary user access to complete control of the machine”.

Dr Roman Yampolskiy, an AI safety researcher at the University of Louisville, argued that the very creation of such a model is concerning.

Speaking to the New York Post, Dr Yampolskiy said: “Ideally, I would love to see this not developed in the first place. And it’s not like they’re going to stop.

“That’s exactly what we expect from those models – they’re going to become better at developing hacking tools, biological weapons, chemical weapons, novel weapons we can’t even envision.”

The emergence of these capabilities has intensified concern across the global financial sector, with industry leaders weighing the potential knock-on effects for cybersecurity and broader economic stability.